Category: News

Knicks beat Nets 113-100 to extend their winning streak over Brooklyn to 12 games

NEW YORK (AP) — Karl-Anthony Towns had 37 points and 12 rebounds, Jalen Brunson scored 27, and the New York Knicks beat the Brooklyn Nets 113-100 at Barclays Center on Monday night.

Towns shot 14 of 20 from the field and made all six of his free throws to lead the Knicks to their 12th straight win over Brooklyn.

New York's last loss to the Nets came on Jan. 28, 2023, when it fell 122-115.

Mikal Bridges finished with 16 points and Jordan Clarkson added 12 off the bench for the Knicks.

New York shot 45 of 88 from the field while holding Brooklyn to 33 of 87.

Noah Clowney scored a career-high 31 points and Michael Porter Jr. had 16 for Brooklyn, which is 0-8 at Barclays Center this season.

The latest edition of the crosstown matchup was tied at 51 after a 3-pointer by the Nets' Terance Mann in the first minute of the third quarter.

Bridges and Towns combined for 23 points to help the Knicks outscore Brooklyn 38-27 in the third quarter on 63.6% shooting from the field, including 6 of 10 from long range, taking an 89-75 lead into the final period.

New York played without Landry Shamet, who sprained his right shoulder in the first quarter of Saturday's loss at Orlando.

___

AP Sports in Spanish: https://apnews.com/hub/deportes

Chicago Bulls lose 143-130 to New Orleans, giving the Pelicans’ interim coach his first win

NEW ORLEANS — Zion Williamson tied a season high with 29 points and the New Orleans Pelicans defeated the Chicago Bulls 143-130 on Monday night to end a nine-game losing streak and win for the first time under interim coach James Borrego.

Saddiq Bey had 20 points and 14 rebounds, highlighted by a pair of late 3s to help New Orleans hold a lead that was 18 points with 5:15 left but fell to single digits in the last two minutes.

Fans stood to applaud as the Pelicans began to run down the clock on their first victory since Nov. 5 at Dallas. Coach Willie Green was fired 10 days after that game, with New Orleans at 2-10.

Borrego needed six games to get his first win, and it came with a balanced, high-scoring effort.

The Pelicans (3-15) had eight players with 10 or more points. Trey Murphy scored 20 points, Jose Alvarado had 16, Yves Missi had 14 points and 14 rebounds, Bryce McGowens scored 11 and Micah Peavy had 10.

Ayo Dosunmu scored 28 points and Coby White added 24 for the Bulls, who went 20 of 50 on 3-pointers. Josh Giddey added 21 points and Jalen Smith 13, while Tre Jones had 10 points and 11 assists off the bench.

The Pelicans led by as many as 22 points in the second quarter when Murphy made it 55-33 by converting a 3-point play on a reverse layup while being fouled by Smith.

After the Bulls had chipped away in the final minutes of the second quarter, Williamson hit a driving layup and added a finger-roll in the final seconds of the period to put the Pelicans up 74-58 at halftime.

Up next

Bulls: Visit the Charlotte Hornets on Friday night.

Pelicans: Host the Memphis Grizzlies on Wednesday night.

https://www.chicagotribune.com/2025/11/24/chicago-bulls-new-orleans-pelicans-interim-coach/

The Google TPU: The Chip Made For The AI Inference Era


By UncoverAlpha

Because I consider Google's TPUs an extremely important topic, I am publishing a comprehensive deep dive: not just a technical overview, but also strategic and financial coverage of the Google TPU.

Topics covered:

The history of the TPU and why it all even started?

The difference between a TPU and a GPU?

Performance numbers TPU vs GPU?

Where are the problems for the wider adoption of TPUs

Google’s TPU is the biggest competitive advantage of its cloud business for the next 10 years

How many TPUs does Google produce today, and how big can that get?

Gemini 3 and the aftermath of Gemini 3 on the whole chip industry

Let’s dive into it.

The history of the TPU and why it all even started?

The story of the Google Tensor Processing Unit (TPU) begins not with a breakthrough in chip manufacturing, but with a realization about math and logistics. Around 2013, Google's leadership (specifically Jeff Dean, Jonathan Ross, now the CEO of Groq, and the Google Brain team) ran a projection that alarmed them. They calculated that if every Android user used Google's new voice search feature for just three minutes a day, the company would need to double its global data center capacity just to handle the compute load.

At the time, Google was relying on standard CPUs and GPUs for these tasks. While powerful, these general-purpose chips were inefficient for the specific heavy lifting required by Deep Learning: massive matrix multiplications. Scaling up with existing hardware would have been a financial and logistical nightmare.

This sparked a new project. Google decided to do something rare for a software company: build its own custom silicon. The goal was to create an ASIC (Application-Specific Integrated Circuit) designed for one job only: running TensorFlow neural networks.

Key Historical Milestones:

2013-2014: The project moved very fast, as Google both hired a very capable team and, to be honest, had some luck in its first steps. The team went from design concept to deploying silicon in data centers in just 15 months, a very short cycle for hardware engineering.

2015: Before the world knew they existed, TPUs were already powering Google’s most popular products. They were silently accelerating Google Maps navigation, Google Photos, and Google Translate.

2016: Google officially unveiled the TPU at Google I/O 2016.

This urgency to solve the "data center doubling" problem is why the TPU exists. It wasn't built to sell to gamers or render video; it was built to save Google from its own AI success. As a result, Google has been working on the "costly" problem of AI inference for over a decade now. This is also one of the main reasons why the TPU is so good today compared to other ASIC projects.

The difference between a TPU and a GPU?

To understand the difference, it helps to look at what each chip was originally built to do. A GPU is a “general-purpose” parallel processor, while a TPU is a “domain-specific” architecture.

GPUs were designed for graphics. They excel at parallel processing (doing many things at once), which is great for AI. However, because they are designed to handle everything from video game textures to scientific simulations, they carry "architectural baggage." They spend significant energy and chip area on complex tasks like caching, branch prediction, and managing independent threads.

A TPU, on the other hand, strips away all that baggage. It has no hardware for rasterization or texture mapping. Instead, it uses a unique architecture called a Systolic Array.

The "systolic array" is the key differentiator. In a standard CPU or GPU, the chip moves data back and forth between memory and the compute units for every calculation. This constant shuffling creates a bottleneck (the von Neumann bottleneck).

In a TPU’s systolic array, data flows through the chip like blood through a heart (hence “systolic”).

It loads data (weights) once.

It passes inputs through a massive grid of multipliers.

The data is passed directly to the next unit in the array without writing back to memory.

What this means, in essence, is that a TPU, because of its systolic array, drastically reduces the number of memory reads and writes required from HBM. As a result, the TPU can spend its cycles computing rather than waiting for data.
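The load-once, pass-to-the-neighbour flow described above can be made concrete with a small simulation. The sketch below is a toy, cycle-level model of a weight-stationary systolic array in plain Python: each processing element (PE) is loaded once with one weight, inputs enter from the left edge with a one-cycle skew per row, and partial sums move down the grid. The function name `systolic_matmul`, the grid layout, and the skewing scheme are illustrative assumptions, not a description of Google's actual matrix unit.

```python
def systolic_matmul(X, W):
    """Toy weight-stationary systolic array computing Y = X @ W.

    Hypothetical model: PE (k, n) holds W[k][n] for the whole run.
    X[m][k] enters row k from the left at cycle m + k and moves one PE
    right per cycle; partial sums move one PE down per cycle and leave
    the bottom row as finished outputs. Nothing is written back to a
    shared memory between steps.
    """
    M, K, N = len(X), len(W), len(W[0])
    # x_reg[k][n]: (row index m, input value) held by PE (k, n), or None.
    x_reg = [[None] * N for _ in range(K)]
    # p_reg[k][n]: (row index m, partial sum) produced by PE (k, n), or None.
    p_reg = [[None] * N for _ in range(K)]
    Y = [[0] * N for _ in range(M)]

    for t in range(M + K + N - 2):
        new_x = [[None] * N for _ in range(K)]
        new_p = [[None] * N for _ in range(K)]
        for k in range(K):
            for n in range(N):
                if n == 0:
                    m = t - k  # skewed injection at the left edge
                    x_in = (m, X[m][k]) if 0 <= m < M else None
                else:
                    x_in = x_reg[k][n - 1]  # handed over by the left neighbour
                new_x[k][n] = x_in
                if x_in is None:
                    continue
                m, x_val = x_in
                if k == 0:
                    p_in = 0
                else:
                    m_above, p_in = p_reg[k - 1][n]  # partial sum from the PE above
                    assert m_above == m  # skew keeps rows aligned
                # Multiply-accumulate against the stationary weight.
                new_p[k][n] = (m, p_in + x_val * W[k][n])
        x_reg, p_reg = new_x, new_p
        # Finished sums drop out of the bottom row.
        for n in range(N):
            if p_reg[K - 1][n] is not None:
                m, total = p_reg[K - 1][n]
                Y[m][n] = total
    return Y


print(systolic_matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]]))  # [[19, 22], [43, 50]]
```

In this model only the left edge reads from X and only the bottom row emits results; every other transfer is between adjacent PEs, which is the memory-traffic saving the paragraph attributes to the systolic design. An M x K by K x N product takes M + K + N - 2 cycles here.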

Google's newest TPU design, Ironwood (TPUv7), also addresses some of the key areas where TPUs were lacking:

An enhanced SparseCore for efficiently handling large embeddings (useful for recommendation systems and LLMs).

Increased HBM capacity and bandwidth (up to 192 GB per chip). For comparison, Nvidia's Blackwell B200 has 192 GB per chip, while Blackwell Ultra, also known as the B300, has 288 GB per chip.

An improved Inter-Chip Interconnect (ICI) for linking thousands of chips into massive clusters, called TPU Pods (needed for AI training as well as some test-time compute inference workloads). ICI is very performant, although its peak bandwidth of 1.2 TB/s is below the 1.8 TB/s of Blackwell's NVLink 5. Still, combined with Google's specialized compiler and software stack, ICI delivers superior performance on some specific AI tasks.

The key thing to understand is that because the TPU doesn't need to decode complex instructions or constantly access memory, it can deliver significantly higher operations per joule.

For scale-out, Google uses Optical Circuit Switches (OCS) and its 3D torus network, which compete with Nvidia's InfiniBand and Spectrum-X Ethernet. The main difference is that OCS is extremely cost-effective and power-efficient, as it eliminates electrical switches and optical-electrical-optical (O-E-O) conversions, but as a result it is not as flexible as the other two. So again, the Google stack is highly specialized for the task at hand and doesn't offer the flexibility that GPUs do.
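The article names the 3D torus without describing it, so here is a generic illustration of what that topology means. In a 3D torus every chip links to six neighbours (one in each direction along x, y, and z), and each ring wraps around, so chips on the "edge" have no dead ends and worst-case distances roughly halve compared with a plain mesh. The 4x4x4 shape and the helper names below are hypothetical; the optical circuit switches themselves and any real routing logic are not modeled.

```python
def torus_neighbors(coord, dims):
    """Six direct neighbours of chip `coord` in a 3D torus of shape `dims`.

    Assumes every dimension has size >= 3 (smaller sizes would produce
    duplicate neighbours because the wraparound link meets itself).
    """
    neighbors = []
    for axis in range(3):
        for step in (-1, 1):
            nxt = list(coord)
            nxt[axis] = (nxt[axis] + step) % dims[axis]  # wraparound link
            neighbors.append(tuple(nxt))
    return neighbors


def torus_hops(a, b, dims):
    """Minimum link count between chips a and b, going the short way round each ring."""
    total = 0
    for p, q, size in zip(a, b, dims):
        d = abs(p - q)
        total += min(d, size - d)
    return total


def mesh_hops(a, b):
    """Same distance in a 3D mesh without wraparound links, for comparison."""
    return sum(abs(p - q) for p, q in zip(a, b))


dims = (4, 4, 4)  # hypothetical 64-chip block
print(torus_neighbors((0, 0, 0), dims))
# [(3, 0, 0), (1, 0, 0), (0, 3, 0), (0, 1, 0), (0, 0, 3), (0, 0, 1)]
print(torus_hops((0, 0, 0), (3, 3, 3), dims), mesh_hops((0, 0, 0), (3, 3, 3)))
# 3 9
```

Opposite corners of the hypothetical block are 3 hops apart on the torus but 9 on a mesh, which is why wraparound links matter for the collective communication that large training jobs rely on.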

Performance numbers TPU vs GPU?

Now that we have defined the differences, let's look at real numbers showing how the TPU performs compared to the GPU. Since Google doesn't disclose these numbers, it is really hard to get performance details. I studied many articles and alternative data sources, including interviews with industry insiders, and here are some of the key takeaways.

The first important point is that there is very limited information on Google's newest TPU, TPUv7 (Ironwood). Google introduced it in April 2025, and it is only now becoming available to external clients (internally, Google is said to have been using Ironwood since April, possibly even for Gemini 3.0). This matters because of the size of the generational jump, as a comparison of TPUv7 with the older but still widely used TPUv5p, based on SemiAnalysis data, shows:

TPUv7 delivers 4,614 TFLOPS (BF16) vs 459 TFLOPS for TPUv5p

TPUv7 has 192 GB of memory capacity vs 96 GB for TPUv5p

TPUv7 memory bandwidth is 7,370 GB/s vs 2,765 GB/s for TPUv5p

The performance leaps between v5p and v7 are clearly very significant. Keep that in mind, as most of the comments we will look at refer to TPUv6 or TPUv5 rather than v7.
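To make the size of the jump concrete, the short calculation below divides each TPUv7 figure by the corresponding TPUv5p figure. It uses only the three numbers listed above and takes them at face value as quoted; the dictionary keys and the `ratios` helper are illustrative names.

```python
# Generational ratios from the SemiAnalysis figures quoted in the article
# (taken at face value, not independently verified here).
specs = {
    "BF16 TFLOPS": (4614, 459),
    "HBM capacity (GB)": (192, 96),
    "HBM bandwidth (GB/s)": (7370, 2765),
}


def ratios(table):
    """Return TPUv7 / TPUv5p for each metric, rounded to one decimal place."""
    return {name: round(v7 / v5p, 1) for name, (v7, v5p) in table.items()}


for name, ratio in ratios(specs).items():
    print(f"{name}: {ratio}x")
# BF16 TFLOPS: 10.1x
# HBM capacity (GB): 2.0x
# HBM bandwidth (GB/s): 2.7x
```

On these figures, peak compute grows roughly ten-fold while memory capacity doubles and bandwidth grows about 2.7x, so comments about TPUv5-era performance understate what v7 offers.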

Based on an analysis of many interviews with former Google employees, customers, and competitors (people from AMD, Nvidia, and others), the results can be summarized as follows.

Most agree that TPUs are more cost-effective than Nvidia GPUs, and most agree that TPUs offer better performance per watt. This view does not hold across all use cases, though.

A former Google Cloud employee:

“If it is the right application, then they can deliver much better performance per dollar compared to GPUs. They also require much lesser energy and produces less heat compared to GPUs. They’re also more energy efficient and have a smaller environmental footprint, which is what makes them a desired outcome.

The use cases are slightly limited to a GPU, they’re not as generic, but for a specific application, they can offer as much as 1.4X better performance per dollar, which is pretty significant saving for a customer that might be trying to use GPU versus TPUs.” – source: AlphaSense

Similarly, a former unit head at Google made a very insightful comment about TPUs materially lowering AI-search cost per query vs GPUs:

“TPU v6 is 60-65% more efficient than GPUs, prior generations 40-45%”

This interview took place in November 2024, so the expert is probably comparing TPUv6 with Nvidia's Hopper. Today, the comparison is already Blackwell vs v7.

Many experts also mention the speed benefit TPUs offer, with a former Google head saying that TPUs are 5x faster than GPUs for training dynamic models (such as search-like workloads).

There was also a very eye-opening interview with a client who uses both Nvidia GPUs and Google TPUs and describes the economics in great detail:

“If I were to use eight H100s versus using one v5e pod, I would spend a lot less money on one v5e pod. In terms of price point money, performance per dollar, you will get more bang for TPU. If I already have a code, because of Google’s help or because of our own work, if I know it already is going to work on a TPU, then at that point it is beneficial for me to just stick with the TPU usage.

In the long run, if I am thinking I need to write a new code base, I need to do a lot more work, then it depends on how long I’m going to train. I would say there is still some, for example, of the workload we have already done on TPUs that in the future because as Google will add newer generation of TPU, they make older ones much cheaper.

For example, when they came out with v4, I remember the price of v2 came down so low that it was practically free to use compared to any NVIDIA GPUs.

Google has got a good promise so they keep supporting older TPUs and they’re making it a lot cheaper. If you don’t really need your model trained right away, if you’re willing to say, “I can wait one week,” even though the training is only three days, then you can reduce your cost 1/5.” – source: AlphaSense

Another valuable interview was with a current AMD employee, acknowledging the benefits of ASICs:

"I would expect that an AI accelerator could do about probably typically what we see in the industry. I'm using my experience at FPGAs. I could see a 30% reduction in size and maybe a 50% reduction in power vs a GPU."

We also got some numbers from a Former Google employee who worked in the chip segment:

"When I look at the published numbers, they (TPUs) are anywhere from 25%-30% better to close to 2x better, depending on the use cases compared to Nvidia. Essentially, there's a difference between a very custom design built to do one task perfectly versus a more general purpose design."

It is also widely held that the real edge of TPUs lies not in the hardware but in the software, and in the way Google has optimized its ecosystem for the TPU.

Many people point to the problem that every Nvidia "competitor," including the TPU, faces: Nvidia develops so fast that rivals are constantly "catching up." This month, a former Google Cloud employee addressed that concern head-on, saying he believes TPUs are improving faster than Nvidia's chips:

“The amount of performance per dollar that a TPU can generate from a new generation versus the old generation is a much significant jump than Nvidia”

Recent data from Google's presentation at Hot Chips 2025 backs that up: Google stated that TPUv7 delivers twice the performance per watt (a 100% improvement) of its TPUv6e (Trillium).

Even staunch Nvidia advocates should not shrug off TPUs; even Nvidia CEO Jensen Huang thinks very highly of them. On a podcast with Brad Gerstner, he said that when it comes to ASICs, Google with its TPUs is a "special case." A few months ago, the WSJ also reported that after The Information published a report stating that OpenAI had begun renting Google TPUs for ChatGPT, Jensen called Altman to ask whether it was true and signaled that he was open to getting their investment talks back on track. Also worth noting: Nvidia's official X account posted a screenshot of an article in which OpenAI denied plans to use Google's in-house chips. To say the least, Nvidia is watching TPUs very closely.

OK, but after looking at these numbers, one might ask: why aren't more clients using TPUs?

Where are the problems for the wider adoption of TPUs

The main problem for TPU adoption is the ecosystem. Nvidia's CUDA is ingrained in the minds of most AI engineers, many of whom learned it at university. Google has developed its ecosystem internally but not externally, as until now it has used TPUs mainly for its internal workloads. TPUs use a combination of JAX and TensorFlow, while the industry skews toward CUDA and PyTorch (although TPUs now support PyTorch as well). While Google is working hard to make its ecosystem more compatible with other stacks, building out the libraries and the ecosystem takes years.

It is also important to note that, until recently, the GenAI industry's focus has largely been on training workloads. CUDA is very important for training, but for inference, even reasoning inference, it matters much less, so the chances of expanding the TPU footprint are much higher in inference than in training (although TPUs do really well in training too, with Gemini 3 being the prime example).

The fact that most clients are multi-cloud also poses a challenge for TPU adoption, as AI workloads are closely tied to data and its location (cloud data transfer is costly). Nvidia is accessible via all three hyperscalers, while TPUs are available only at GCP so far. A client who uses TPUs and Nvidia GPUs explains it well:

“Right now, the one biggest advantage of NVIDIA, and this has been true for past three companies I worked on is because AWS, Google Cloud and Microsoft Azure, these are the three major cloud companies.

Every company, every corporate, every customer we have will have data in one of these three. All these three clouds have NVIDIA GPUs. Sometimes the data is so big and in a different cloud that it is a lot cheaper to run our workload in whatever cloud the customer has data in.

I don’t know if you know about the egress cost that is moving data out of one cloud is one of the bigger cost. In that case, if you have NVIDIA workload, if you have a CUDA workload, we can just go to Microsoft Azure, get a VM that has NVIDIA GPU, same GPU in fact, no code change is required and just run it there.

With TPUs, once you are all relied on TPU and Google says, “You know what? Now you have to pay 10X more,” then we would be screwed, because then we’ll have to go back and rewrite everything. That’s why. That’s the only reason people are afraid of committing too much on TPUs. The same reason is for Amazon’s Trainium and Inferentia.” – source: AlphaSense

These problems are well known at Google, so it is no surprise that whether to keep TPUs inside Google or start selling them externally is a constant topic of internal debate. Keeping them internal strengthens the GCP moat, but many former Google employees believe that at some point Google will start offering TPUs externally as well, perhaps through some neoclouds rather than through its two biggest competitors, Microsoft and Amazon. Opening up the ecosystem, providing support, and making TPUs more widely usable are the first steps toward making that possible.

A former Google employee also mentioned that Google formed a more sales-oriented team last year to push and sell TPUs. In other words, Google has not been pushing hard to sell TPUs for years; this is a fairly new dynamic in the organization.

Google’s TPU is the biggest competitive advantage of its cloud business for the next 10 years

The most valuable thing about TPUs, in my view, is their impact on GCP. As we witness the transformation of cloud businesses from the pre-AI era to the AI era, the biggest takeaway is that the industry has gone from an oligopoly of AWS, Azure, and GCP to a more commoditized landscape, with Oracle, CoreWeave, and many other neoclouds competing for AI workloads. The problem is that this competition, combined with Nvidia's 75% gross margin, leaves cloud providers with low margins on AI workloads. The cloud industry is moving from a 50-70% gross margin industry to a 20-35% gross margin industry. For cloud investors, this should be concerning, as the future profile of some of these companies looks more like that of a utility than an attractive, high-margin business. But there is a way to avoid that future and return to normal margins: the ASIC.

Cloud providers that control their own hardware and are not beholden to Nvidia and its 75% gross margin will be able to return to a world of 50% gross margins. It is no surprise that AWS, Azure, and GCP are all developing their own ASICs. By far the most mature is Google's TPU, followed by Amazon's Trainium, and lastly Microsoft's Maia (although Microsoft owns the full IP of OpenAI's custom ASICs, which could help it in the future).

Even with ASICs you are not 100% independent, as you still have to work with a partner like Broadcom or Marvell, whose margins are lower than Nvidia's but still not negligible. Here, too, Google is in a very good position. Over years of developing TPUs, Google has brought much of the chip design process in-house. According to a current AMD employee, Broadcom no longer knows everything about the chip: Google is now the front-end designer (the actual RTL of the design), while Broadcom is only the back-end physical design partner. On top of that, Google of course owns the entire software optimization stack for the chip, which is what makes it as performant as it is. Based on this split of work, the AMD employee thinks Broadcom is lucky if it gets a 50% gross margin on its part.

Without having to pay Nvidia for the accelerator, a cloud provider can either price its compute similarly to others and keep a better margin profile, or lower its prices and gain market share. Of course, all of this depends on having a very capable ASIC that can compete with Nvidia. So far, it looks like Google is the only one that has achieved that, as the top-performing model, Gemini 3, was trained on TPUs. According to some former Google employees, Google also uses TPUs internally for inference across its entire AI stack, including Gemini and models like Veo. Google buys Nvidia GPUs for GCP because clients want them, being familiar with them and their ecosystem, but internally, Google is all-in on TPUs.

As the complexity of each generation of ASICs increases, similar to the complexity and pace of Nvidia, I predict that not all ASIC programs will make it. I believe outside of TPUs, the only real hyperscaler shot right now is AWS Trainium, but even that faces much bigger uncertainties than the TPU. With that in mind, Google and its cloud business can come out of this AI era as a major beneficiary and market-share gainer.

Recently, we even got comments from the SemiAnalysis team praising the TPU:

“Google’s silicon supremacy among hyperscalers is unmatched, with their TPU 7th Gen arguably on par with Nvidia Blackwell. TPU powers the Gemini family of models which are improving in capability and sit close to the pareto frontier of $ per intelligence in some tasks” – source: SemiAnalysis

How many TPUs does Google produce today, and how big can that get?

Here are the numbers that I researched…

Continue reading at uncoveralpha.com

Tyler Durden

Mon, 11/24/2025 – 23:00

https://www.zerohedge.com/markets/chip-made-ai-inference-era-google-tpu

Andrej Stojaković scores 24 points as No. 13 Illinois beats Texas Rio Grande Valley 87-73

CHAMPAIGN — Andrej Stojaković scored 24 points for his fourth 20-point performance in five games, leading No. 13 Illinois to an 87-73 victory over Texas Rio Grande Valley on Monday night.

Mihailo Petrović had 12 points, and David Mirković and twins Zvonimir and Tomislav Ivišić each added 10 for the Illini (6-1). They are 6-0 at home.

Illinois has started the season scoring 80 or more points in all seven games. The Illini haven’t done that since the 1988-89 season, when the Fighting Illini, who lost in the national semifinals of the NCAA Tournament, opened with 12 consecutive games of 80 or more points.

Kye Dickson scored 21 points and Filip Brankovic had 15 for Texas Rio Grande Valley (2-4), which trailed 42-31 at halftime and cut the Illinois lead to six points twice early in the second half, but didn’t get any closer.

Stojaković scored 14 points in the first half.

Illinois, the top rebounding team in the Big Ten, outrebounded the Vaqueros 44-32. Keaton Wagler and Ben Humrichous each had eight rebounds, Zvonimir Ivišić had seven, and Stojaković had six.

The Vaqueros went 16-15 last season. It was their first winning season since 2018-19.

Rio Grande Valley is a second-year member of the Southland Conference after spending 11 years in the Western Athletic Conference.

Illinois coach Brad Underwood went 53-1 against Southland Conference teams while winning three regular-season and three conference tournament championships from 2013-2016, when he was at Stephen F. Austin.

The Illini will face No. 5 UConn at Madison Square Garden in New York on Friday.

Tyler Herro scores 24 points in season debut as Heat beat Mavericks 106-102

MIAMI (AP) — Tyler Herro scored 24 points in his season debut after recovering from ankle surgery, Kel'el Ware had 20 points and 18 rebounds, and the Miami Heat held off a Dallas Mavericks rally to win 106-102 on Monday night.

Bam Adebayo had 17 points for Miami, which was held nearly 20 points below its season scoring average but improved to 8-1 at home.

P.J. Washington scored 27 points for Dallas, which got 15 from Max Christie, 13 from Klay Thompson and 12 each from Cooper Flagg and Brandon Williams.

Dallas trailed by 13 before making the game interesting late.

Flagg made a pair of free throws with 1:04 to play, tying the game at 102. The Mavs got a stop on Miami's next possession, but Adebayo stole the inbounds pass and Herro scored with 41.2 seconds left to put Miami back in front.

Miami, playing its third game in four nights, was without leading scorer Norman Powell (groin), Andrew Wiggins (hip) and Nikola Jovic (hip).

Mavericks big man Anthony Davis missed his 14th straight game with a strained left calf, although he had been upgraded to doubtful earlier Monday, indicating there was a slight chance he would play. Dallas doesn't play again until Friday, against Davis' former team, the Los Angeles Lakers.

"I think he continues to get better. He's working to get back. We anticipate he'll be at practice this week. I think any time with a calf strain you have to be cautious. But he's worked extremely hard, and the next step is practice on Wednesday, so we'll see what happens," Mavericks coach Jason Kidd said.


The UK And Canada Lead The West’s Descent Into Digital Authoritarianism


Authored by Sonia Elijah via The Brownstone Institute,

“Big Brother is watching you.”

These chilling words from George Orwell’s dystopian masterpiece, 1984, no longer read as fiction but are becoming a bleak reality in the UK and Canada—where digital dystopian measures are unravelling the fabric of freedom in two of the West’s oldest democracies.

Under the guise of safety and innovation, the UK and Canada are deploying invasive tools that undermine privacy, stifle free expression, and foster a culture of self-censorship. Both nations are exporting their digital control frameworks through the Five Eyes alliance, a covert intelligence-sharing network uniting the UK, Canada, US, Australia, and New Zealand, established during the Cold War.

Simultaneously, their alignment with the United Nations’ Agenda 2030, particularly Sustainable Development Goal (SDG) 16.9—which mandates universal legal identity by 2030—supports a global policy for digital IDs, such as the UK’s proposed Brit Card and Canada’s Digital Identity Program, which funnel personal data into centralized systems under the pretext of “efficiency and inclusion.” By championing expansive digital regulations, such as the UK’s Online Safety Act and Canada’s pending Bill C-8, which prioritize state-defined “safety” over individual liberties, both nations are not just embracing digital authoritarianism—they’re accelerating the West’s descent into it.

The UK’s Digital Dragnet

The United Kingdom has long positioned itself as a global leader in surveillance. The British spy agency, Government Communications Headquarters (GCHQ), runs the formerly secret mass surveillance programme, code-named Tempora, operational since 2011, which intercepts and stores vast amounts of global internet and phone traffic by tapping into transatlantic fibre-optic cables. Knowledge of its existence only came about in 2013, thanks to the bombshell documents leaked by the former National Security Agency (NSA) intelligence contractor and whistleblower, Edward Snowden. “It’s not just a US problem. The UK has a huge dog in this fight,” Snowden told the Guardian in a June 2013 report. “They [GCHQ] are worse than the US.”

Following that is the Investigatory Powers Act (IPA) 2016, also dubbed the "Snooper's Charter," which mandates that internet service providers store users' browsing histories and records of their emails, texts, and phone calls for up to a year. Government agencies, including police and intelligence services (like MI5, MI6, and GCHQ), can access this data without a warrant in many cases, enabling bulk collection of communications metadata. This has been criticized for enabling mass surveillance on a scale that invades everyday privacy.

Recent expansions under the Online Safety Act (OSA) further empower authorities to demand backdoors to encrypted apps like WhatsApp, potentially scanning private messages for vaguely defined “harmful” content—a move critics like Big Brother Watch, a privacy advocacy group, decry as a gateway to mass surveillance. The OSA, which received Royal Assent on October 26, 2023, represents a sprawling piece of legislation by the UK government to regulate online content and “protect” users, particularly children, from “illegal and harmful material.”

Implemented in phases by Ofcom, the UK’s communications watchdog, it imposes duties on a vast array of internet services, including social media, search engines, messaging apps, gaming platforms, and sites with user-generated content, forcing compliance through risk assessments and hefty fines. By July 2025, the OSA was considered “fully in force” for most major provisions. This sweeping regime, aligned with global surveillance trends via Agenda 2030’s push for digital control, threatens to entrench a state-sanctioned digital dragnet, prioritizing “safety” over fundamental freedoms.

Elon Musk's platform X has warned that the act risks "seriously infringing" on free speech: the threat of fines of up to £18 million or 10% of global annual turnover for non-compliance encourages platforms to censor legitimate content to avoid punishment. Musk took to X to express his personal view of the act's true purpose: "suppression of the people."

In late September, Imgur (an image-hosting platform popular for memes and shared media) made the decision to block UK users rather than comply with the OSA’s stringent regulations. This underscores the chilling effect such laws can have on digital freedom.

The act’s stated purpose is to make the UK “the safest place in the world to be online.” However, critics argue that it’s a brazen power grab by the UK government to increase censorship and surveillance, all the while masquerading as a noble crusade to “protect” users.

Another pivotal development is the Data (Use and Access) Act 2025 (DUAA), which received Royal Assent in June. This wide-ranging legislation streamlines data protection rules to boost economic growth and public services but at the cost of privacy safeguards. It allows broader data sharing among government agencies and private entities, including for AI-driven analytics. For instance, it enables “smart data schemes” where personal information from banking, energy, and telecom sectors can be accessed more easily, seemingly for consumer benefits like personalized services—but raising fears of unchecked profiling.

Cybersecurity enhancements further expand the UK’s pervasive surveillance measures. The forthcoming Cyber Security and Resilience Bill, announced in the July 2024 King’s Speech and slated for introduction by year’s end, would extend the Network and Information Systems (NIS) Regulations to critical infrastructure, mandating real-time threat reporting and government access to systems. This builds on existing tools like facial recognition technology, deployed extensively in public spaces. In 2025, trials in cities like London have integrated AI cameras that scan crowds in real time, linking to national databases for instant identification, evoking a biometric police state.

Source: BBC News

The New York Times reported: “British authorities have also recently expanded oversight of online speech, tried weakening encryption, and experimented with artificial intelligence to review asylum claims. The actions, which have accelerated under Prime Minister Keir Starmer with the goal of addressing societal problems, add up to one of the most sweeping embraces of digital surveillance and internet regulation by a Western democracy.”

Compounding this, UK police arrest over 30 people a day for “offensive” tweets and online messages, per The Times, often under vague laws, fuelling justifiable fears of Orwell’s thought police.

Yet, of all the UK’s digital dystopian measures, none has ignited greater fury than Prime Minister Starmer’s mandatory “Brit Card” digital ID—a smartphone-based system effectively turning every citizen into a tracked entity.

First announced on September 4 as a tool to “tackle illegal immigration and strengthen border security,” the Brit Card rapidly ballooned in scope through function creep to envelop everyday essentials like welfare, banking, and public access. These IDs, stored on smartphones and containing sensitive data such as photos, names, dates of birth, nationalities, and residency status, are sold “as the front door to all kinds of everyday tasks,” a vision championed by the Tony Blair Institute for Global Change and echoed by Work and Pensions Secretary Liz Kendall MP in her October 13 parliamentary speech.

🚨🇬🇧 DIGITAL ID: THE END OF PRIVACY DISGUISED AS “CONVENIENCE”

In Parliament yesterday, MPs pushed the Digital ID scheme, selling it as “modern” and “efficient.”

But behind the polished language lies a darker truth – total state control.

🔴 Every job, school record, medical… pic.twitter.com/Rb2DoBJxze

— British Intel (@TheBritishIntel) October 14, 2025

Source: TheBritishIntel

This system of digital shackles has sparked fierce resistance across the UK. A scathing letter, led by independent MP Rupert Lowe and endorsed by nearly 40 MPs from diverse parties, denounces the government’s proposed mandatory “Brit Card” digital ID as “dangerous, intrusive, and profoundly un-British.” Conservative MP David Davis issued a stark warning, declaring that such systems “are profoundly dangerous to the privacy and fundamental freedoms of the British people.”

On X, Davis amplified his critique, citing a £14m fine imposed on Capita after hackers breached pension savers’ personal data, writing: “This is another perfect example of why the government’s digital ID cards are a terrible idea.” By early October, a petition opposing the proposal had garnered over 2.8 million signatures, reflecting widespread public outcry. The government, however, dismissed these objections, stating, “We will introduce a digital ID within this Parliament to address illegal migration, streamline access to government services, and improve efficiency. We will consult on details soon.”

Canada’s Surveillance Surge

Across the Atlantic, Canada’s surveillance surge under Prime Minister Mark Carney—former Bank of England head and World Economic Forum board member—mirrors the UK’s dystopian trajectory. Carney, with his globalist agenda, has overseen a slew of bills that prioritize “security” over sovereignty. Take Bill C-2, An Act to amend the Customs Act, introduced June 17, 2025, which enables warrantless data access at borders and sharing with US authorities via CLOUD Act (Clarifying Lawful Overseas Use of Data Act) pacts—essentially handing Canadian citizens’ digital lives to foreign powers. Despite public backlash prompting proposed amendments in October, its core—enhanced monitoring of transactions and exports—remains ripe for abuse.

Complementing this, Bill C-8, first introduced June 18, 2025, amends the Telecommunications Act to impose cybersecurity mandates on critical sectors like telecoms and finance. It empowers the government to issue secret orders compelling companies to install backdoors or weaken encryption, potentially compromising user security. These orders can mandate the cutoff of internet and telephone services to specified individuals without the need for a warrant or judicial oversight, under the vague premise of securing the system against “any threat.”

Opposition to this bill has been fierce. In a parliamentary speech, Canada’s Conservative MP Matt Strauss decried the bill’s sections 15.1 and 15.2 as granting “unprecedented, incredible power” to the government. He warned of a future where individuals could be digitally exiled—cut off from email, banking, and work—without explanation or recourse, likening it to a “digital gulag.”

‼️ MUST WATCH: CANADA HAS GONE 100% COMMUNIST ‼️ Introduced on October 1, Bill C-8 will effectively end people’s lives on demand. If you say the ‘wrong thing’, the government will be able to immediately cut your internet and telephone. pic.twitter.com/HeOHg3CSLE

— Andrew Bridgen (@ABridgen) October 6, 2025

Source: Video shared by Andrew Bridgen

The Canadian Constitution Foundation (CCF) and privacy advocates have echoed these concerns, arguing that the bill’s ambiguous language and lack of due process violate fundamental Charter rights, including freedom of expression, liberty, and protection against unreasonable search and seizure.

Bill C-8 complements the Online Harms Act (Bill C-63), first introduced in February 2024, which demanded that platforms purge content like child exploitation and hate speech within 24 hours, risking censorship through vague definitions of “harmful” content. Inspired by the UK’s OSA and the EU’s Digital Services Act (DSA), C-63 collapsed amid fierce backlash over its potential to enable censorship and infringe on free speech, as well as its lack of due process. The CCF and Pierre Poilievre, calling it “woke authoritarianism,” led a 2024 petition with 100,000 signatures. It died during Parliament’s January 2025 prorogation after Justin Trudeau’s resignation.

These bills build on an alarming precedent: during the Covid era, Canada’s Public Health Agency admitted to tracking 33 million devices during lockdown—nearly the entire population—under the pretext of public health, a blatant violation exposed only through persistent scrutiny. The Communications Security Establishment (CSE), empowered by the longstanding Bill C-59, continues bulk metadata collection, often without adequate oversight. These measures are not isolated; they stem from a deeper rot, where pandemic-era controls have been normalized into everyday policy.

Canada’s Digital Identity Program, touted as a “convenient” tool for seamless access to government services, emulates the UK’s Brit Card and aligns with UN Agenda 2030’s SDG 16.9. It remains in active development and piloting phases, with full national rollout projected for 2027–2028.

“The price of freedom is eternal vigilance.” Orwell’s 1984 warns we must urgently resist this descent into digital authoritarianism—through petitions, protests, and demands for transparency—before a Western Great Firewall is erected, replicating China’s stranglehold that polices every keystroke and thought.

Republished from the author’s Substack

Tyler Durden

Mon, 11/24/2025 – 22:35

https://www.zerohedge.com/political/uk-and-canada-lead-wests-descent-digital-authoritarianism

Pistons win 13th straight to tie team record, beating Pacers 122-117

INDIANAPOLIS (AP) — Cade Cunningham had 24 points and 11 rebounds, and the Detroit Pistons won their 13th consecutive game to tie the franchise record, beating the Indiana Pacers 122-117 on Monday night.

The Pistons matched the winning streaks of their 1989-90 and 2003-04 championship teams, two seasons after losing 28 straight games to set the NBA single-season record and tie the overall mark. Detroit, which leads the Eastern Conference, is 15-2.

Indiana trailed by 18 points early in the fourth quarter before pulling within two. Bennedict Mathurin missed a tying 3-pointer with 11 seconds left.

Caris LeVert added 19 points for Detroit, and Jalen Duren had 17 points and 12 rebounds. Jaden Ivey finished with 12 points in his second game back after breaking his left fibula in January.

Pascal Siakam scored 24 points for injury-riddled Indiana, and Jarace Walker added 21. The Pacers have lost 10 of 11 games and dropped to 2-15.

Indiana has struggled without Tyrese Haliburton, the star guard who tore his right Achilles tendon in the Pacers’ Game 7 loss to Oklahoma City in the NBA Finals.

Detroit outscored Indiana 36-23 in the second quarter to take a 71-55 lead. The Pistons shot 58.5% from the field in the half, including 7 of 14 from 3-point range.

The Pistons led 101-88 after three quarters.

JP Morgan, Who Had No Issues Banking Epstein, Abruptly Closes Strike CEO Jack Mallers’ Account

JP Morgan, Who Had No Issues Banking Epstein, Abruptly Closes Strike CEO Jack Mallers’ Account

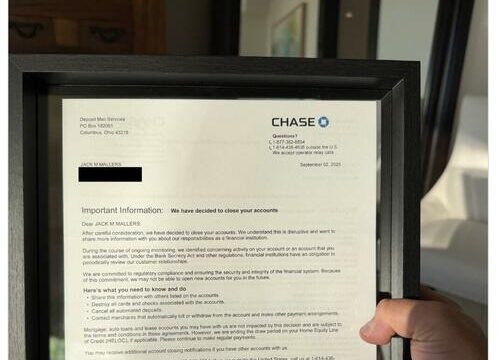

JPMorgan Chase abruptly closed Strike CEO Jack Mallers’ personal accounts last month, giving him no warning and offering only a cryptic explanation, according to Yahoo Finance.

Mallers posted on X that “Last month, J.P. Morgan Chase threw me out of the bank,” noting how odd it was given that “My dad has been a private client there for 30+ years.” When he asked why, the bank told him only: “We aren’t allowed to tell you.”

Yahoo writes that he even framed the closure letter, which accused him of unspecified “concerning activity” and warned the bank “may not be able to open new accounts for you in the future.”

The incident reignited concerns that the alleged Biden-era “Operation Chokepoint 2.0” is still lurking in the background, despite Trump’s new executive order aimed at penalizing firms that debank crypto businesses. Critics online immediately connected the dots, suggesting regulators and banks are still quietly squeezing crypto-aligned companies and founders.

JPMorgan’s move sparked a broader backlash from Bitcoin advocates like Grant Cardone, Max Keiser, and others who are already furious over the bank’s perceived hostility toward Bitcoin and its recent push to delist companies with heavy BTC exposure. Many publicly closed their JPMorgan accounts, accusing the bank of targeting the crypto sector while having no trouble maintaining far more questionable clients in the past. (Apparently “concerning activity” was never a problem back when they were happily banking Epstein.)

Tether CEO Paolo Ardoino replied to Mallers that the whole ordeal is “for the best,” later adding that organizations trying to undermine Bitcoin “will fail and become dust.” Meanwhile, JPMorgan insists it’s just protecting the “security and integrity of the financial system”—a claim that might land better if the bank’s compliance radar didn’t seem to activate only when the customer is a crypto CEO rather than, say, a notorious sex-trafficking financier.

Recall that just days ago we wrote that the bank is under fire from Florida officials over its cooperation with the Biden DOJ’s anti-Trump investigation known as “Arctic Frost,” including providing sensitive banking information to Biden prosecutor Jack Smith.

We also noted that US regulators are examining whether JPMorgan Chase has denied customers fair access to banking, as pressure grows over debanking decisions made against conservative figures, according to reporting from the Financial Times and the company’s 10-Q filing.

In its quarterly filing, the bank noted it was “responding to requests from government authorities and other external parties regarding, among other things, the firm’s policies and processes and the provision of services to customers and potential customers”.

JPMorgan linked the scrutiny to an August executive order from Donald Trump directing regulators to review possible “politicised or unlawful debanking”. The bank said related inquiries include “reviews, investigations and legal proceedings,” without identifying the agencies involved.

Tyler Durden

Mon, 11/24/2025 – 22:10

Brandon Ingram scores 37 as Raptors beat Cavaliers 110-99 for eighth straight win

TORONTO (AP) — Brandon Ingram scored 15 of his season-high 37 points in the third quarter, Scottie Barnes had 18 points and 11 rebounds, and the Toronto Raptors beat the Cleveland Cavaliers 110-99 on Monday night for their eighth straight victory.

Sandro Mamukelashvili scored 12 points and Immanuel Quickley had 11 for Toronto, which has won 12 of 13.

Two of the Raptors’ wins during the streak have come against the Cavaliers. Toronto swept the season series for the first time since the 2019-20 season.

Donovan Mitchell had 17 points for the Cavaliers but shot 6 of 20, including 3 of 12 from 3-point range.

Jaylon Tyson scored 15, and Evan Mobley and Nae’Qwan Tomlin had 14 apiece for Cleveland. Lonzo Ball shot 3 of 15, missing 10 of 12 attempts from long range, and finished with eight points.

Toronto closed the first half on a 13-2 run to take a 57-54 lead at the break.

The Raptors played without RJ Barrett, who has a sprained right knee. Barrett left Sunday’s win over Brooklyn in the third quarter after landing awkwardly on a breakaway dunk. Tests did not reveal a serious injury, coach Darko Rajakovic said.

Ingram made 6 of 12 shots in the third. His last basket, a 3-pointer with 1:20 left in the period, gave Toronto its largest lead at 88-74.

Cleveland’s Darius Garland (sore left big toe) and De’Andre Hunter (rest) sat out the second night of a back-to-back. Toronto’s Jakob Poeltl returned after missing Sunday’s game with lower back soreness and had 13 rebounds.

Jarrett Allen, who missed his third straight game with a sprained finger on his right hand, was one of seven Cavs players out with injuries.

Google Denies Claims That It’s Reading Gmails To Train Its AI

Authored by Jack Phillips via The Epoch Times (emphasis ours),

Google is denying viral claims that private Gmail emails are being used to train its AI models.

The announcement follows multiple reports this past week that the company has rolled out such features.

In a post issued on Nov. 21, Gmail said that it wanted to “set the record straight on recent misleading reports.” It listed several points, saying, “We have not changed anyone’s settings,” Gmail’s “smart features” have existed for years, and, “We do not use your Gmail content to train our Gemini AI model.”

“We are always transparent and clear if we make changes to our terms [and] policies,” Google said.

The claims about Google included a post from cybersecurity company MalwareBytes, which later issued a correction. Separately, a post on X from a YouTube content creator received around 150,000 likes. It contained similar claims that users were automatically opted into allowing Google to use their Gmail emails to train its AI models.

“We’ve updated this article after realizing we contributed to a perfect storm of misunderstanding around a recent change in the wording and placement of Gmail’s smart features,” MalwareBytes said in its correction.

“The settings themselves aren’t new, but the way Google recently rewrote and surfaced them led a lot of people (including us) to believe Gmail content might be used to train Google’s AI models, and that users were being opted in automatically.”

The company noted that “after taking a closer look at Google’s documentation and reviewing other reporting, that doesn’t appear to be the case.”

Google has maintained on several of its blogs that it would protect user privacy regarding its Gemini AI models.

“Your data stays in Workspace,” says a company policy page. “We do not use your Workspace data to train or improve the underlying generative AI and large language models that power Gemini, Search, and other systems outside of Workspace without permission.”

It adds that for some features, including “accepting or rejecting spelling suggestions, or reporting spam,” suggestions are rendered anonymous or aggregated and could be used in “new features we are currently developing, like improved prompt suggestions that help Workspace users get the best results from Gemini features.”

“These features are developed with strict privacy protections that keep users in control,” the company says.

The smart features program for Gmail enables automated email filtering and categorization, automated text composition, and suggested quick replies, according to the company.

To check whether the features are turned on or off, users can open Gmail on desktop or in the mobile app and click the gear icon, then proceed to See All Settings on desktop or Settings on mobile.

Then they can go to a section called smart features in Gmail, Chat, and Meet. To turn the features on or off, users can check or uncheck the box that says “Turn on smart features in Gmail, Chat, and Meet.”

Tyler Durden

Mon, 11/24/2025 – 21:45

https://www.zerohedge.com/technology/google-denies-claims-its-reading-gmails-train-its-ai